Web crawler - Python 3.6 crawler keeps re-scraping the content of the first page

Problem description

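The problem is as stated in the title. I tried switching to a while loop and many other changes, but nothing worked. Any help would be appreciated.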

    # coding: utf-8
    import os

    import lxml.html
    import requests


    class MyError(Exception):
        def __init__(self, value):
            self.value = value

        def __str__(self):
            return repr(self.value)


    def get_lawyers_info(url):
        r = requests.get(url)
        html = lxml.html.fromstring(r.content)
        # Earlier selectors that were tried:
        # phones = html.xpath("//span[@class='law-tel']")
        # names = html.xpath("//p[@class='fl']/p/a")
        phones = html.xpath("//span[@class='phone pull-right']")
        names = html.xpath("//h4[@class='text-center']")
        if len(phones) != len(names):
            raise MyError('Number of lawyer names does not match number of phone numbers: ' + url)
        phone_infos = [(name.text, phone.text_content()) for name, phone in zip(names, phones)]

        phone_infos_list = []
        for name, phone in phone_infos:
            # .text is None for an empty element, so test for falsiness rather than == ''
            if not name:
                info = '沒留姓名' + ': ' + phone + '\r\n'  # '沒留姓名' means 'no name given'
            else:
                info = name + ': ' + phone + '\r\n'
            print(info)
            phone_infos_list.append(info)
        return phone_infos_list


    dir_path = os.path.abspath(os.path.dirname(__file__))
    print(dir_path)
    file_path = os.path.join(dir_path, 'lawyers_info.txt')
    print(file_path)
    # Start from an empty output file on every run
    if os.path.exists(file_path):
        os.remove(file_path)

    with open(file_path, 'ab') as file:
        for i in range(1000):
            url = 'http://www.xxxx.com/cooperative_merchants?searchText=&industry=100&provinceId=19&cityId=0&areaId=0&page=' + str(i + 1)
            info = get_lawyers_info(url)
            for each in info:
                file.write(each.encode('gbk'))

Answers

Answer 1:

    # coding: utf-8
    import requests
    from pyquery import PyQuery as Q

    url = 'http://www.51myd.com/cooperative_merchants?industry=100&provinceId=19&cityId=0&areaId=0&page='

    with open('lawyers_info.txt', 'ab') as f:
        for i in range(1, 5):
            r = requests.get('{}{}'.format(url, i))
            usernames = Q(r.text).find('.username').text().split()
            phones = Q(r.text).find('.phone').text().split()
            # Python 3: print is a function and zip is lazy, so build a list first.
            # f is open for appending; write the pairs to it here to save them.
            print(list(zip(usernames, phones)))
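One way to check whether the server really returns a different page for each `page` value is to fingerprint every response and stop as soon as one repeats. The sketch below is only an illustration under assumptions, not the answerer's method: `build_page_url` and `crawl_until_repeat` are hypothetical helper names, the query parameters are copied from the URL in Answer 1, and `fetch` is any callable you supply that returns a page body as bytes.

```python
import hashlib
from urllib.parse import urlencode

# Base URL taken from Answer 1; the helper names below are hypothetical.
BASE_URL = 'http://www.51myd.com/cooperative_merchants'


def build_page_url(page, base_url=BASE_URL):
    """Build the listing URL for one page from an explicit parameter dict."""
    query = {
        'industry': 100,
        'provinceId': 19,
        'cityId': 0,
        'areaId': 0,
        'page': page,
    }
    return '{}?{}'.format(base_url, urlencode(query))


def crawl_until_repeat(fetch, max_pages=1000):
    """Call fetch(page) for pages 1..max_pages and collect the bodies.

    Stops early when a body is empty or is byte-for-byte identical to a
    body already seen, which is the symptom described in the question.
    """
    seen = set()
    bodies = []
    for page in range(1, max_pages + 1):
        body = fetch(page)
        digest = hashlib.sha256(body).hexdigest()
        if not body or digest in seen:
            break
        seen.add(digest)
        bodies.append(body)
    return bodies
```

With `requests` installed, the fetcher could be something like `lambda page: requests.get(build_page_url(page)).content`. If the crawl stops after a single page, the server is probably ignoring the `page` parameter for that request. Note that a byte-for-byte comparison can miss repeats when pages embed changing values such as timestamps; comparing the extracted names and phone numbers instead would be more robust in that case.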

相關文章:

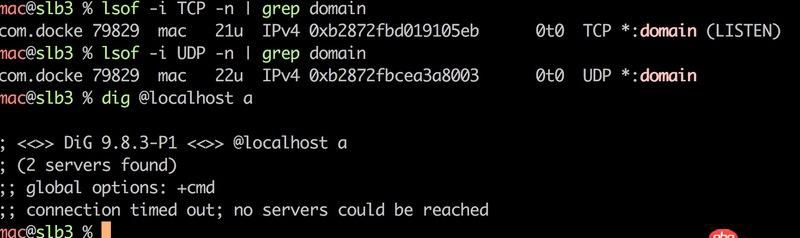

1. phpstudy8.1沒集成mysql-front2. 關docker hub上有些鏡像的tag被標記““This image has vulnerabilities””3. node.js - mongodb查找子對象的名稱為某個值的對象的方法4. docker鏡像push報錯5. Docker for Mac 創建的dnsmasq容器連不上/不工作的問題6. 前端 - @media query 使用出現的問題?7. 利用IPMI遠程安裝centos報錯!8. docker 下面創建的IMAGE 他們的 ID 一樣?這個是怎么回事????9. html5 - datatables 加載不出來數據。10. javascript - 在 model里定義的 引用表模型時,model為undefined。

網公網安備

網公網安備